Reproducibility in Scientific Computing

What is reproducibility?

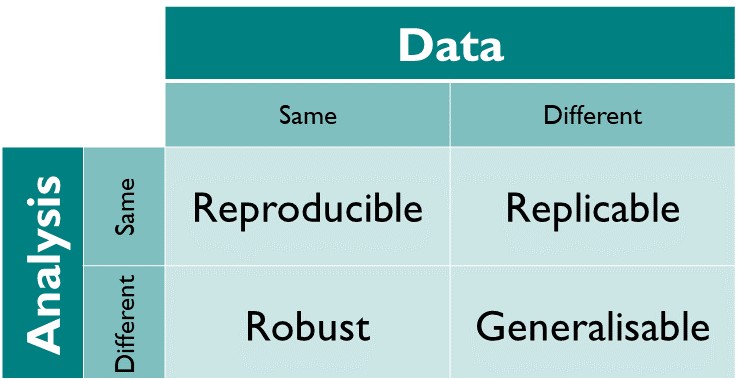

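We will use the definitions used in The Turing Way. In scientific research, a result is:

- Reproducible: the same analysis on the same data gives the same result

- Replicable: the same analysis on different data gives qualitatively similar results

- Robust: a different analysis on the same data gives qualitatively similar results

- Generalisable: a different analysis on different data gives qualitatively similar results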

For a result to be reproducible, it should be possible for someone else to:

- Access your code

- Obtain all the relevant data

- Run the analysis defined by your code on the data and get the same result

Any time, anywhere!

Why is reproducibility important?

In the context of scientific computing/analysis, we want to be able to:

- Verify our own results

- Verify the results of others

By making our work reproducible, we ensure that both these things are not just possible, but straightforward!

Reproducibility in Earth sciences

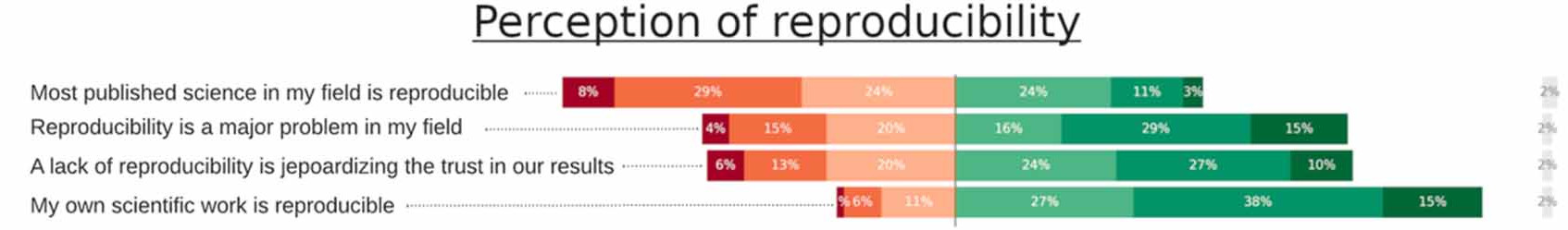

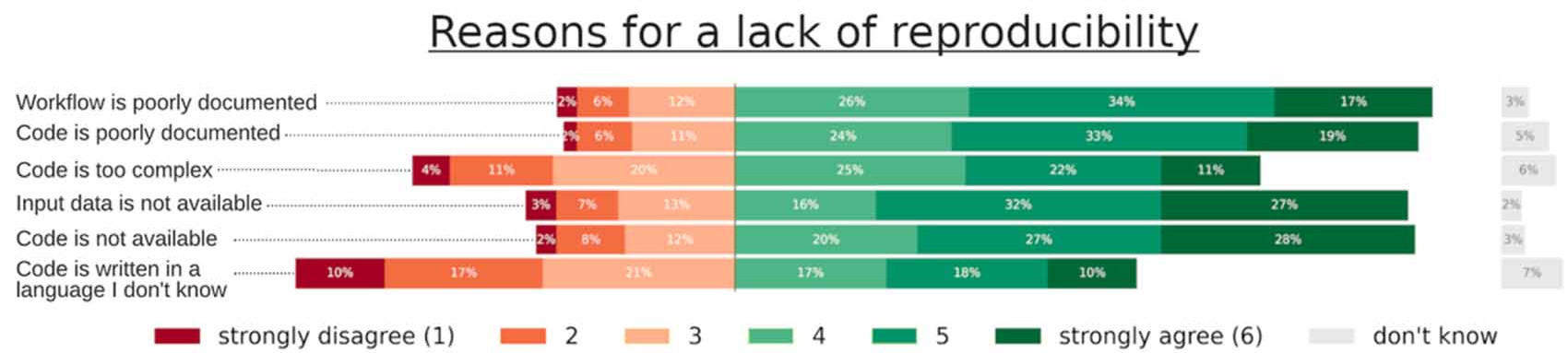

Poll of researchers in Earth sciences:

Other findings:

- 82% of researchers are self taught programmers

- 40% do not know who legally owns the code they are writing

- More than 35% of respondents say it can take 2-3 weeks to train a new PhD student to use the group's research software

- 23% said up to one year, 8% said more than a year!

Additional benefits

- Reproducibility is not just for scientific results

- By making our software more reproducible, we also get to reap the benefits!

Safely implement changes

- Automation and testing help catch mistakes before they become an issue

Rerun analyses

- Create reusable pipelines

- Untangle data from code so analysis can run on different inputs

Faster onboarding

- Better documentation on how to use the software

- Relevant metadata present - no hunting down dependencies

Better collaboration

- Version control for keeping track of code history

- Accessibility of code and data

Where do we go from here…

Throughout the rest of this session, we will walk through the steps we can take to go from an ad hoc collection of scripts to a reproducible scientific workflow!

Reproducibility must-haves:

- Version Control

- READMEs

- Licenses

- Automation

- Testing

Version Control

- The first thing we should do is move our project into version control (VC)

- This way we never lose the original state of the project

- We can then try things without worrying about breaking anything!

- This will also benefit any later development, so the sooner the better

What to add to VC

- DON’T do this:

- Our repository should only contain:

  - Code/scripts

  - Documentation

  - Metadata

  - i.e. just text files

There will be some exceptions to this rule, but for the vast majority of cases it will be true.

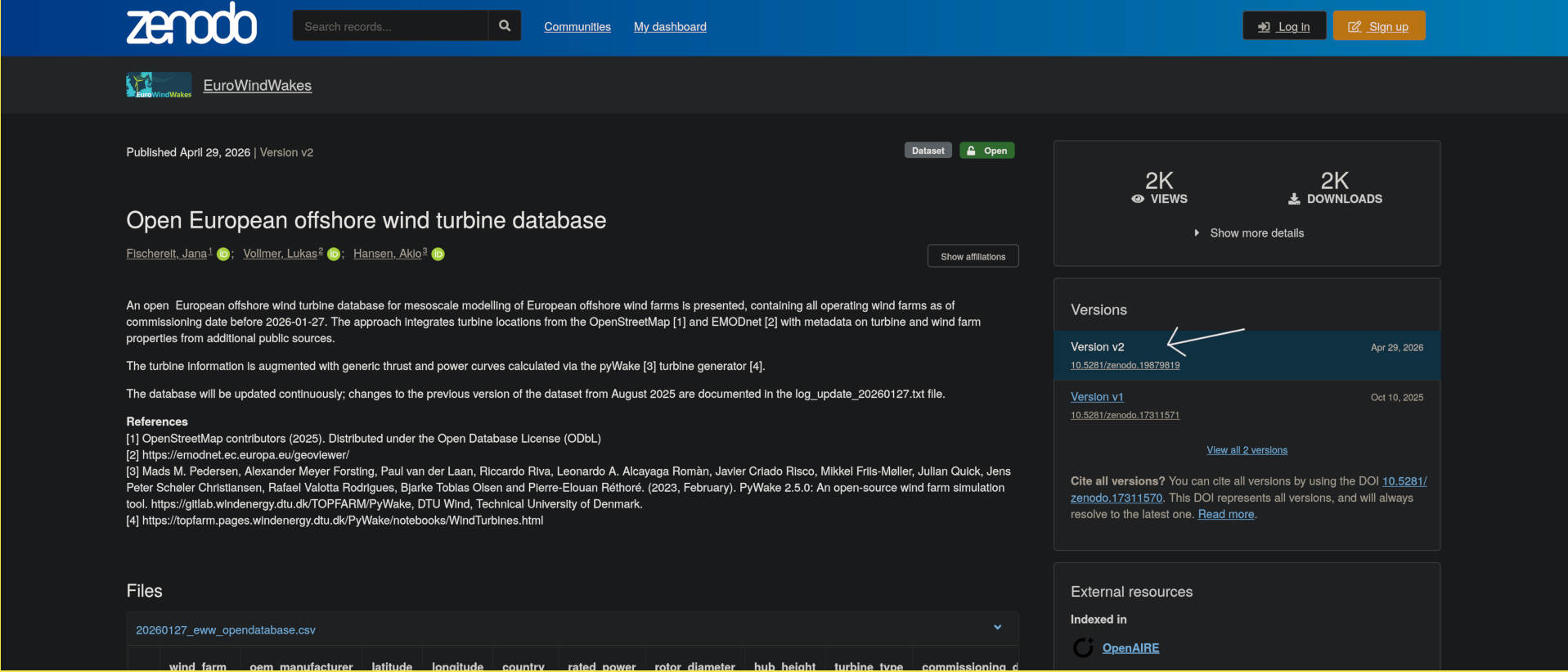

- Large data files should be hosted separately (e.g. on Zenodo)

- External dependencies should be declared

  - e.g. link to the Zenodo dataset in docs and code

- Use .gitignore to automatically ignore any unwanted files

  - e.g. build outputs

Aside - testing with worktrees

- git worktrees are like “local clones” of a repository

- Create a worktree:

- Will make a new directory, with only files that are tracked

- Can use as a cleanroom to ensure all dependencies are there

- For more info:

git worktree add --help

What to do next?

- The repository can then also be hosted on a remote service (e.g. GitHub, GitLab, Codeberg, Bitbucket)

- This will make collaboration with other people a lot easier!

- It will also mean that any work done can be accessed by collaborators

Dependencies

- All software has dependencies

- Some are more obvious than others:

  - Data/input

  - Packages/libraries e.g. numpy, Eigen

  - System libraries

  - Compiler/Interpreter

- If your code can’t run without it, it’s a dependency!

How to discover dependencies

- Some dependencies may be “implicit”

- For example, you may have a library installed on your system

- Since the code “just works”, you may not be aware of the dependency

- To find these, try running on a different system (or multiple) and see what breaks

How to declare dependencies

- List them in a tracked file in the repository

- e.g. add a “Dependencies” section to your README.md

- Specify:

  - Versions of each dependency e.g. numpy 2.3.9

  - Where/how to acquire the dependency
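Version numbers for Python packages can be read from the current environment rather than typed by hand. This is a minimal sketch using the standard library's `importlib.metadata`; the function name `dependency_lines` and the package list are hypothetical, chosen only for illustration.

```python
from importlib import metadata


def dependency_lines(packages: list[str]) -> list[str]:
    """Format one 'name==version' line per package, flagging any that are not installed."""
    lines = []
    for name in packages:
        try:
            lines.append(f"{name}=={metadata.version(name)}")
        except metadata.PackageNotFoundError:
            lines.append(f"{name}  # not installed in this environment")
    return lines


# Hypothetical package list; replace with the packages your code imports.
for line in dependency_lines(["numpy", "scipy"]):
    print(line)
```

The printed lines can be pasted into the "Dependencies" section of the README, alongside a note on where each package comes from.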

How to declare data dependencies

- Links to publicly accessible data dependencies

- Zenodo or some other long term solution

- Reference specific versions of the data

- Ideally automate the fetching of the data
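Automated fetching can be sketched as a download followed by a checksum comparison, so that a changed or corrupted file is caught before any analysis runs. This is only an illustration: `sha256_of` and `fetch` are hypothetical names, and the URL, destination and checksum must come from the published record of your own dataset.

```python
import hashlib
import urllib.request
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def fetch(url: str, dest: Path, expected_sha256: str) -> Path:
    """Download `url` to `dest` unless a verified copy exists; fail on checksum mismatch."""
    if not (dest.exists() and sha256_of(dest) == expected_sha256):
        dest.parent.mkdir(parents=True, exist_ok=True)
        urllib.request.urlretrieve(url, dest)
    actual = sha256_of(dest)
    if actual != expected_sha256:
        raise ValueError(f"checksum mismatch for {dest}: got {actual}")
    return dest
```

Pinning the checksum in the repository ties the code to one specific version of the data, and a verified local copy is reused without touching the network.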

Dependency metadata

- There are automated ways of resolving dependencies

- Usually language/tool specific

- Some tools automatically update dependency metadata

  - e.g. Rust’s cargo, Julia’s Pkg, uv for Python

- Project file: dependencies and compatible versions

- Lock file: exact version (plus other metadata, e.g. source) of every dependency you are using

- Important to track both: lock files record the exact environment you use

System dependencies - Conda

- Python-centric package manager

- Can be used for installing Python packages

- Also able to manage environments

  - useful for per-project dependencies

  - can install system dependencies

  - even different Python versions!

- Available on most HPC systems (usually as Miniconda)

- Can export environments to text-based files

System dependencies - Containers

- Containers package up code and all dependencies

- Operating-system-level virtualisation: containers share the host kernel

- Faster than a virtual machine with many of the benefits

- Examples:

  - Docker (popular; the engine is open source, Docker Desktop is proprietary)

  - Podman (Open source alternative)

  - Apptainer (HPC focused/compatible)

- Portable and cross-platform

System dependencies - Nix/Guix

What they have in common:

- Declarative package managers

- Use functional languages to describe environments

  - same inputs = same outputs

- Packages are hashed

  - can look up if a package already exists locally

- Publicly hosted and versioned package repository

  - All packages have defined and unchanging dependencies

- Handles all dependencies automatically

  - even linked libraries, so no hidden dependencies!

How they differ:

- Nix language vs Guile Scheme

- Guix package repository only hosts fully open source packages

  - Can be difficult when using vendor-locked software e.g. CUDA

Automation

Automation is important in scientific computing:

- It is common to have different workflows using one piece of software

- Many different research artifacts may be produced from one analysis/dataset

- Figures, data files, statistical results etc.

- Different people may need/want to create the same artifacts

By automating tasks as much as possible, we make it easier to perform the actions and produce the results we want

Why automate?

There are many benefits to automating workflows:

- Faster

- Code as instructions

  - No need to write how to do X

  - Can be tested!

- Flexibility

  - Improved control

  - Extendable

- Less chance of mistakes

  - Computers are good at following instructions

Automation and reproducibility

Automating workflows tracks how research artifacts were actually produced

Makes it easier for others to use and verify our work

Results are more trustworthy

How to automate

- Bash scripts

  - Common on HPC, works on virtually all Linux machines

  - Can easily use command line programs

  - More complicated scripts can become verbose

  - All variables are strings (lots of footguns)

- Python scripts

  - Much more “batteries included” e.g. argument parsing is built into the standard library

  - Access to Python libraries

  - Not always available, but will run on any machine with Python installed

  - Not as easy to invoke external programs

- Scripts should be written like any other code!
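As an illustration of the Python route, the sketch below combines `argparse` for typed command-line arguments with `subprocess.run` for invoking an external program. The argument names (`input`, `--output`, `--iterations`) and the helper `run` are hypothetical. The external command here is just the current Python interpreter so that the example runs anywhere.

```python
import argparse
import subprocess
import sys


def parse_args(argv=None):
    """Parse command-line arguments; `type=int` converts and validates the value."""
    parser = argparse.ArgumentParser(description="Run one analysis step.")
    parser.add_argument("input", help="path to the input data file")
    parser.add_argument("--output", default="results.csv", help="where to write results")
    parser.add_argument("--iterations", type=int, default=100, help="number of iterations")
    return parser.parse_args(argv)


def run(command):
    """Run an external program, raising if it exits with a non-zero status."""
    completed = subprocess.run(command, check=True, capture_output=True, text=True)
    return completed.stdout


args = parse_args(["data.nc", "--iterations", "10"])
print(args.input, args.output, args.iterations)

# Calling the current interpreter keeps the demo portable; a real pipeline
# would call the model or plotting executable here instead.
print(run([sys.executable, "-c", "print('step finished')"]))
```

Passing `check=True` makes a failing step stop the pipeline immediately instead of silently producing incomplete results.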

Testing

- Important to test code

- Check

  - that code does what it should

  - that results of code do not change with code changes

  - that the code still works after dependency changes

  - that the code works for edge cases

Testing and Reproducibility

Tests are your “reproducibility insurance”

Flags (hopefully) when something is dodgy in your codebase

- Find the problems before someone else does!

Improve reliability and trustworthiness

A very short intro to testing

Unit tests

- Test the smallest logical unit of the code

- Ensure each component works as intended

- Test functions for known results

- Compare to previously produced results

Integration tests

- Test that components work together

- Try to have a range of complexity of tests

- Can use previous results to validate model

- Ensure no regression of results
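A unit test for a small function might look like the sketch below. The function `rmse` is a hypothetical stand-in for one logical unit of an analysis code. The tests cover a known result, a trivial case and an edge case.

```python
import math


def rmse(predicted, observed):
    """Root-mean-square error between two equal-length sequences."""
    if len(predicted) != len(observed):
        raise ValueError("sequences must have the same length")
    if not predicted:
        raise ValueError("sequences must not be empty")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


def test_rmse_known_result():
    # Errors of 3 and 4 give sqrt((9 + 16) / 2) = sqrt(12.5)
    assert math.isclose(rmse([3.0, 4.0], [0.0, 0.0]), math.sqrt(12.5))


def test_rmse_identical_inputs_is_zero():
    assert rmse([1.0, 2.0], [1.0, 2.0]) == 0.0


def test_rmse_rejects_empty_input():
    try:
        rmse([], [])
    except ValueError:
        return
    raise AssertionError("expected ValueError for empty input")


# A test runner such as pytest would discover the test_* functions automatically;
# calling them directly keeps this sketch runnable without third-party packages.
for test in (test_rmse_known_result, test_rmse_identical_inputs_is_zero,
             test_rmse_rejects_empty_input):
    test()
print("all tests passed")
```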

Adding tests to a project

- Often we inherit large projects with no unit tests

- How do we improve test coverage in this case?

1. Create integration tests - use previous results or create “golden outputs”

2. Identify and extract parts of the code which can be split apart

3. Create unit tests for the new functions

4. Run the integration tests to ensure results have not changed

5. Repeat 2-4 until all code has unit tests

- Whenever you change a part of the code, try to use this method

- Code coverage will slowly improve, with less extra work
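The “golden output” idea can be sketched as follows: record the result of the existing code once, then compare every later run against the stored copy. `legacy_analysis` and `check_against_golden` are hypothetical names, and the temporary directory stands in for a tracked location in the repository.

```python
import json
import tempfile
from pathlib import Path


def legacy_analysis(values):
    """Stand-in for an inherited, untested analysis routine (hypothetical)."""
    return {"n": len(values), "mean": sum(values) / len(values), "max": max(values)}


def check_against_golden(result, golden_path: Path, tolerance: float = 1e-9) -> bool:
    """Record `result` on first run; afterwards compare new results to the stored copy."""
    if not golden_path.exists():
        golden_path.write_text(json.dumps(result, indent=2, sort_keys=True))
        return True
    golden = json.loads(golden_path.read_text())
    return golden.keys() == result.keys() and all(
        abs(golden[key] - result[key]) <= tolerance for key in golden
    )


golden_file = Path(tempfile.mkdtemp()) / "golden.json"
data = [1.0, 2.0, 4.0]
assert check_against_golden(legacy_analysis(data), golden_file)  # first run records the output
assert check_against_golden(legacy_analysis(data), golden_file)  # later runs must still match
print("results unchanged")
```

A small tolerance is used rather than exact equality because floating-point results can differ slightly between platforms and library versions.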

Automating tests (CI etc)

- Automate testing to ensure tests pass for every commit

- Also useful for tests that can take a long time/need lots of resources

- If hosting code on e.g. GitHub, GitLab etc, can use Continuous Integration (CI)

Documentation

- Not all information can be conveyed in code

- We need to tell other people how to use our projects

- And sometimes ourselves!

- Documentation covers anything outside of the code/metadata

README

- Markdown file at the project root

- Should contain:

  - Description of project

  - Dependencies

  - Instructions on building/running

Generating Docs

- Use tools that generate docs from source code

- Single source of truth

- Comments/Docstrings embedded in code

- Reduce separation between code and docs
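In Python, a docstring can serve as both documentation and a test. In the sketch below the function `celsius_to_kelvin` is a hypothetical example: documentation generators (Sphinx is one such tool for Python) can pull the docstring into rendered docs, and the standard library's `doctest` module can execute the examples it contains.

```python
import doctest


def celsius_to_kelvin(temperature_c: float) -> float:
    """Convert a temperature from degrees Celsius to kelvin.

    Parameters
    ----------
    temperature_c : float
        Temperature in degrees Celsius; must not be below absolute zero.

    Examples
    --------
    >>> celsius_to_kelvin(0.0)
    273.15
    >>> celsius_to_kelvin(-273.15)
    0.0
    """
    if temperature_c < -273.15:
        raise ValueError("temperature below absolute zero")
    return temperature_c + 273.15


# Execute the examples embedded in the docstring; nothing is printed if they all pass.
doctest.run_docstring_examples(
    celsius_to_kelvin, {"celsius_to_kelvin": celsius_to_kelvin}, name="celsius_to_kelvin"
)
```

Because the examples are run, the documentation cannot silently drift away from what the code does.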

FAIR and FAIR4RS Principles

The FAIR principles were first introduced for data, and later adapted for research software (FAIR4RS).

FAIR stands for

Findable

Software, and its metadata, are easy for humans and machines to find.

- Cite your software and data in your papers (DOIs).

- Document which results you got with which software and data version.

- Use version control.

- Document your data and software.
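One lightweight way to document which software and data versions produced a result is to write a small provenance record next to each output file. This is a sketch only: the function name `provenance` and the field names are hypothetical, and the version strings would come from your own release tags and dataset records.

```python
import json
import platform
import sys
from datetime import datetime, timezone


def provenance(data_version: str, software_version: str) -> dict:
    """Collect the details needed to say which versions produced a result."""
    return {
        "created": datetime.now(timezone.utc).isoformat(),
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "software_version": software_version,
        "data_version": data_version,
    }


# Hypothetical version strings for illustration.
record = provenance(data_version="v1.2", software_version="0.3.0")
print(json.dumps(record, indent=2))
```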

Accessible

Software, and its metadata, are retrievable via standardised protocols.

- Version controlled, documented and identifiable.

- Ideally, software and data are open source.

- Use a permissive license.

Interoperable

Software interoperates with other software by exchanging data and/or metadata, and/or through interaction via application programming interfaces (APIs), described through standards.

- Provide clear and well documented interfaces.

- Avoid reinventing the wheel - use standards. (There have been clever people before you…)

Reusable

Software is both usable (can be executed) and reusable (can be understood, modified, built upon, or incorporated into other software).

- Again: Documentation, licenses, standards.

- Build your software in a modular way.

Reproducibility Initiatives

Efforts to improve software/research reproducibility

Various groups and organisations work for better reproducibility.

Conferences and journals have started to ask for software, data etc. to back up research findings.

Software sustainability and research software engineering have become a thing (internationally).

But still not widely known outside of the bubble!

UK Reproducibility Network

Peer-led consortium within the UK, international networks

National Steering Group, local and institutional groups

Training, events, engaging with stakeholders

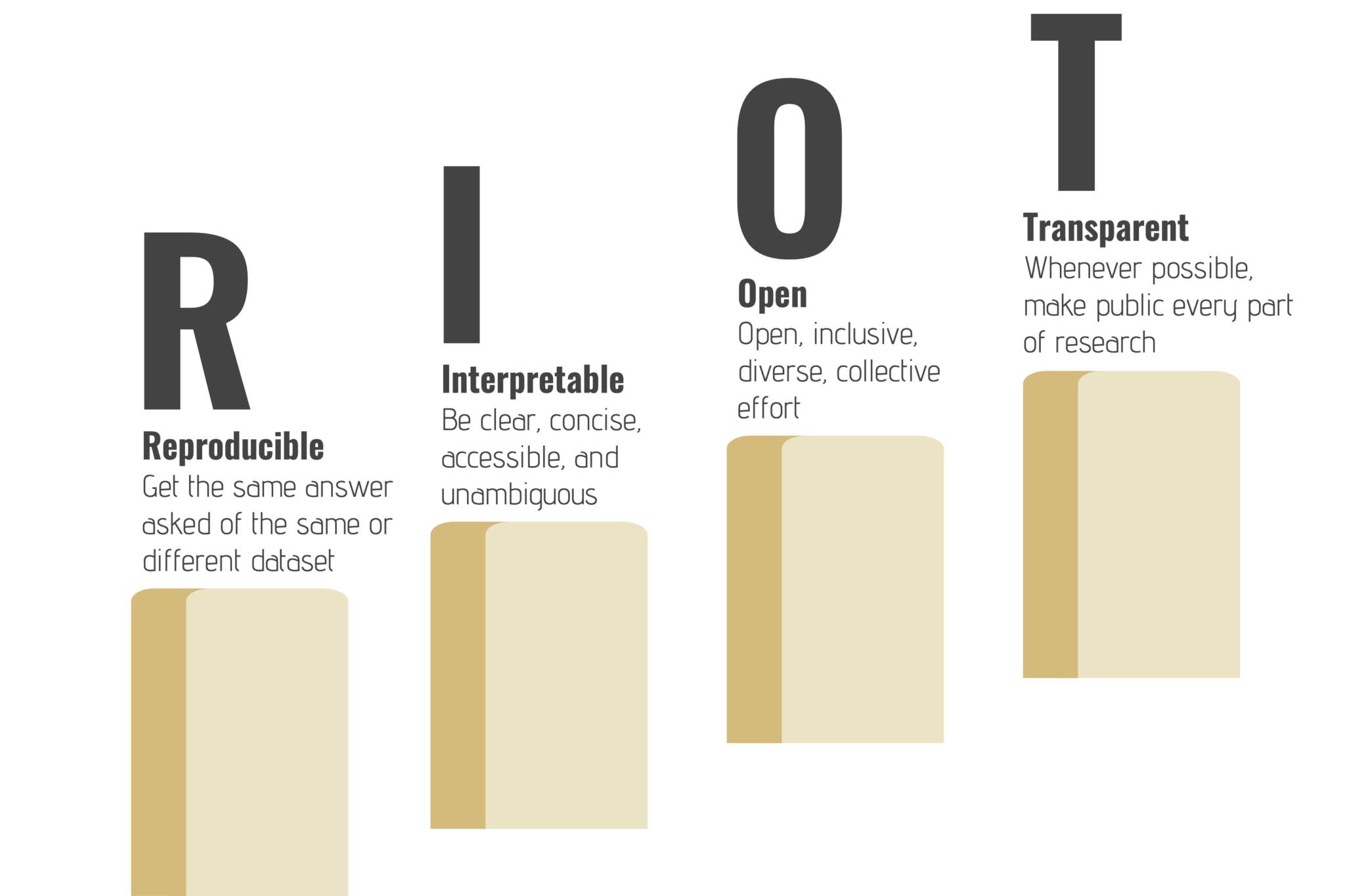

RIOT Science

- Groups at universities (mostly Psychology, mostly UK)

- Conferences, events, seminars open to everyone

The Turing Way

- Handbook

- Community

- Collaboration

ACM Reproducibility Badges

SC Reproducibility Initiative

SC (formerly Supercomputing), The International Conference for High Performance Computing, Networking, Storage, and Analysis

Initiative started in 2015, Artifact Descriptions (ADs) optional for the first years -> used in Student Cluster Competition

Then gradually made mandatory for more categories/prizes Computational Results Analysis (CRA) -> Artifact Evaluation (AE) appendix still optional

AD/AE committee evaluates appendices and recommends ACM badge awards (IEEE badges seem to have vanished)

Reproducibility challenge introduced in 2021

ReproHack

Challenge: Reproduce the results of a paper in one day!

Started in 2016 and 2017 as satellite events of OpenCon (inspired by a course by Owen Petchey)

Developed further by Anna Krystalli in her SSI fellowship

More events, a team formed, remote ReproHacks became a thing…

ReproHack Hub launched in 2021

- Material and checklists for organisers

- Paper database

- Evaluation forms

- Events listing

- Support through ReproHack Slack

And more!

JOSS

ReScience C

CODECHECK

ML Reproducibility Challenge

Climate Informatics Reproducibility Challenge

…

Conclusion/Outlook

Reproducibility is important

Primary benefits:

- Confidence in scientific results

- Peer review/cross analysis

Additional benefits:

- Allows for code reuse

- Better collaboration

Ingredients for reproducibility:

- Version Control

- Dependency Metadata

- Public Accessibility

Even better if

- Testing for:

- Verification

- Regression checks

Make it easy!

- When starting from scratch, much easier to implement these as you go

- For a large project:

  - Add to VC

  - Document dependencies

  - Follow best practice for new code

  - Implement small improvements whenever modifying
